API Canvases: Are We Using Them Wrong?

Net API Notes for 2023/11/20, Issue 226

By all outward appearances, the workshop had been a success. My API oversight team had gotten the budget to fly in the expert, we had logistical'd all the calendars, and spent two working days filling the conference room walls with colorful stickies. There had been alignment. The stakeholders left, seemingly in high spirits.

However, as I gathered the arrayed paper, markers, and Post-It notes, I sank deeper and deeper into a funk. "Sure, this all looks very impressive," I thought, "but what have we actually accomplished?" Years later, as I reflect on the exercise, I can still remember how I felt in that moment. But I'll be darned if I can remember what we talked about, much less list any long-term impacts that came from it.

Technologists have developed numerous structured activities to explore a problem space. Many of those have, subsequently, been adapted for use in the API space. In particular, authors and consultants have created all sorts of Canvases. How did these canvases come about, what do they attempt to do, and - perhaps most importantly - are they worth the time and effort to fill out? I cover that and more in this edition of Net API Notes.

A Brief and Incomplete History of API Canvas Modeling

A Business Need

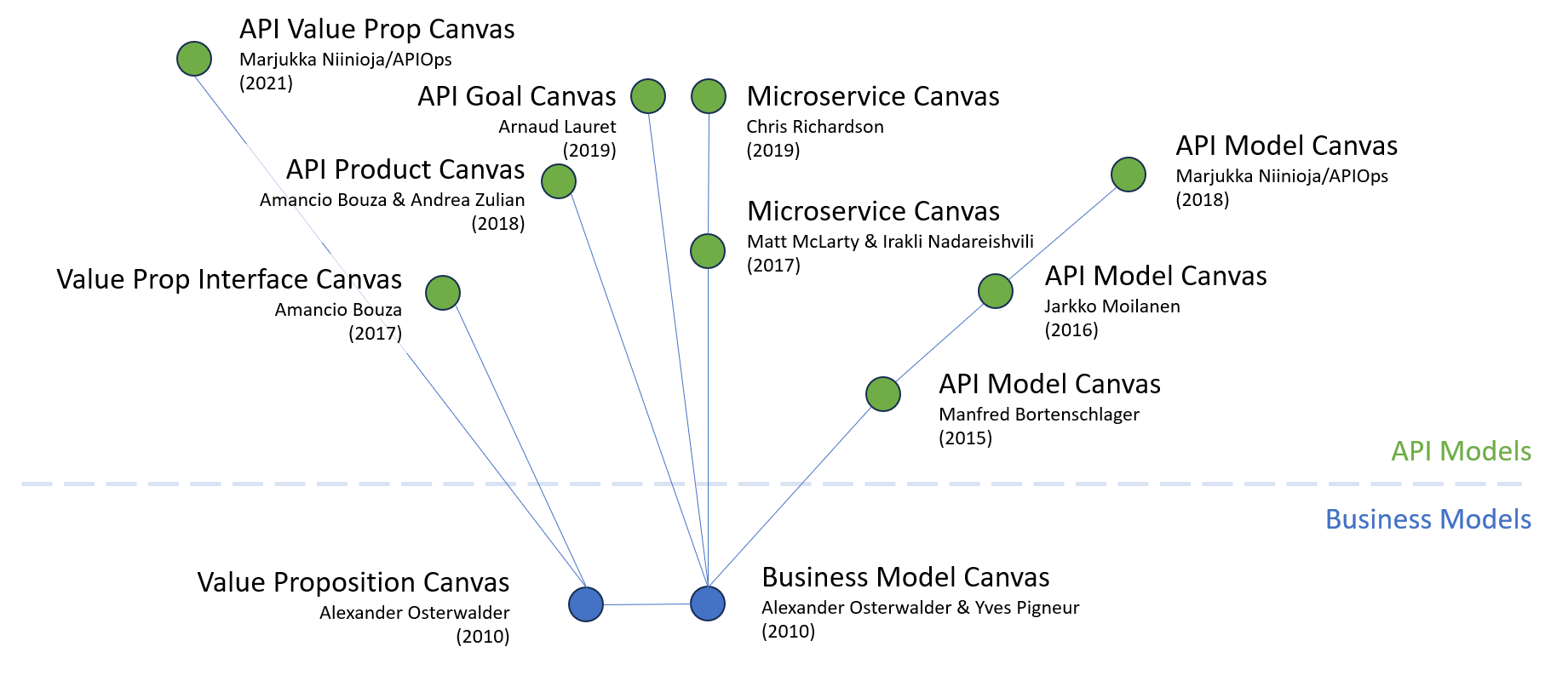

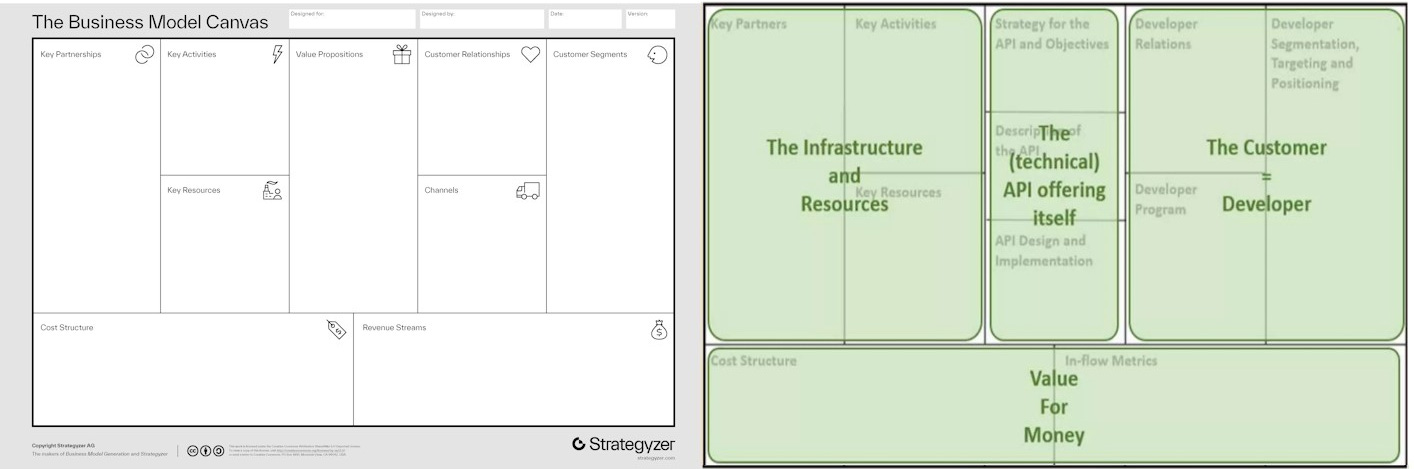

When we talk about the popularity of canvases, we have to start with the OG, the Business Model Canvas. It was developed by Alexander Osterwalder and Yves Pigneur and popularized in their Business Model Generation (2010). Osterwalder also developed a derivative called the Value Proposition Canvas around the same time.

Crossing the Chasm from Business Tool to the API Space

The first API-related Canvas reference I uncovered during research was in a 2015 presentation from Manfred Bortenschlager. As shown below, there are some clear allusions to the Business Model Canvas, but with some tweaks: replacing Customers with Developers and swapping revenue streams for "in-flow metrics".

There were subsequent iterations on how this canvas could be used. Jarkko Moilanen shared his version of the API Model Canvas in a 2016 Nordic APIs article. To this day, the APIOps website maintains a similar API Model Canvas, one that mixes some of the original Business Model Canvas customer and revenue prompts with aspects of the developer audience's realities.

Microservice Canvas

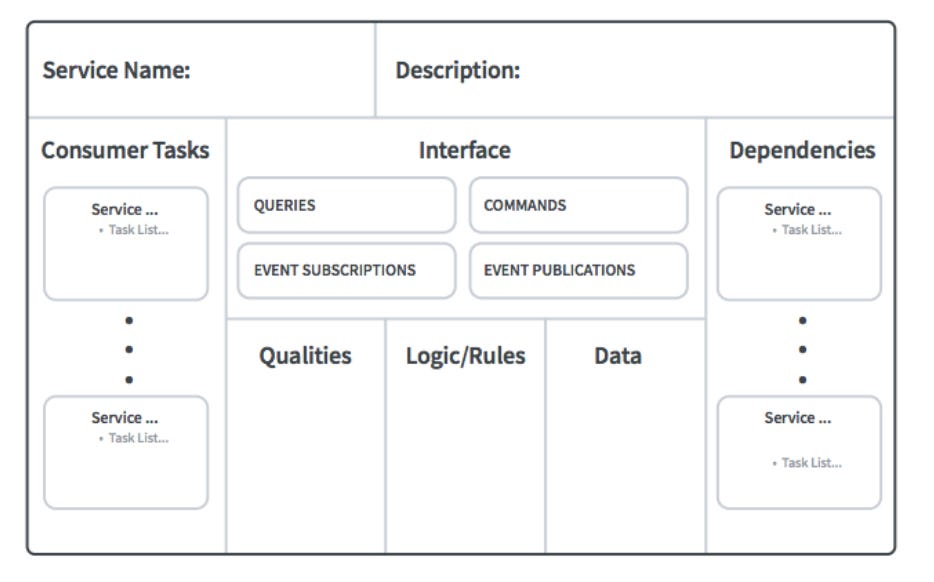

But the API Model Canvas isn't the only variation of the original Business Model Canvas. In 2017, Matt McLarty and Irakli Nadareishvili proposed the Microservice Canvas. As the authors suggested, using the canvas encourages "just enough" upfront design. Microservice designers could use it to capture "essential" service attributes while avoiding the mistakes of excessive, cumbersome planning documentation of other design processes.

In 2019, author and microservice raconteur Chris Richardson proposed his version of the microservice canvas. Richardson's version includes non-functional requirements, combines subscriptions and publications into the same area, and introduces key metrics and health route concepts. As he stated at the time, his version "emphasizes the interface (top of the canvas) and the dependencies (bottom of the canvas) and de-emphasizes implementation (middle of the canvas)".

Other API Canvases

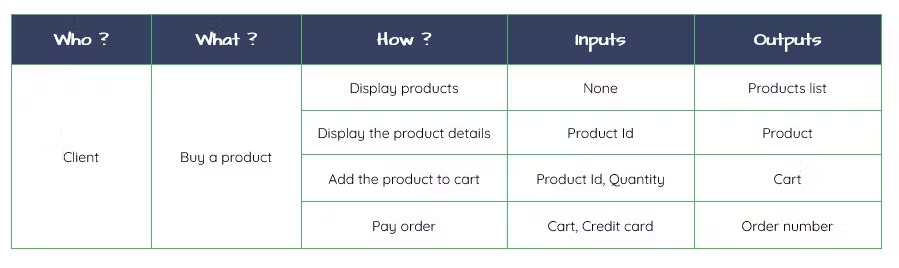

While researching this piece, I also came across a handful of other API canvases. In his 2019 book, The Design of Web APIs, Arnaud Lauret proposed the API Goal Canvas. Much like the Microservice Canvas variations, Lauret's intention is to provide a lightweight discovery process for API creators to answer:

- Who uses the API?

- What can they do with it?

- How will they do that?

- What do they need (the inputs)?

- What do they get in return (the outputs)?
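As a rough illustration of how lightweight those five prompts are, one row of such a canvas could be captured as a small data structure. This is a minimal sketch, not Lauret's own format: the `GoalRow` name, its field names, and the shopping example are all assumptions made for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class GoalRow:
    """One hypothetical canvas row, mirroring the five prompts listed above."""

    who: str = ""    # Who uses the API?
    what: str = ""   # What can they do with it?
    how: str = ""    # How will they do that?
    inputs: list[str] = field(default_factory=list)   # What do they need?
    outputs: list[str] = field(default_factory=list)  # What do they get in return?

    def open_questions(self) -> list[str]:
        """Return the names of the prompts that nobody has answered yet."""
        answers = {
            "who": self.who,
            "what": self.what,
            "how": self.how,
            "inputs": self.inputs,
            "outputs": self.outputs,
        }
        return [name for name, value in answers.items() if not value]


# Illustrative (made-up) row: the outputs have not been discussed yet.
row = GoalRow(
    who="Customer",
    what="Buy products",
    how="Add a product to the cart",
    inputs=["product id"],
)
unanswered = row.open_questions()
```

Keeping the unanswered prompts visible, rather than forcing every box to be filled, is the point of a helper like `open_questions`.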

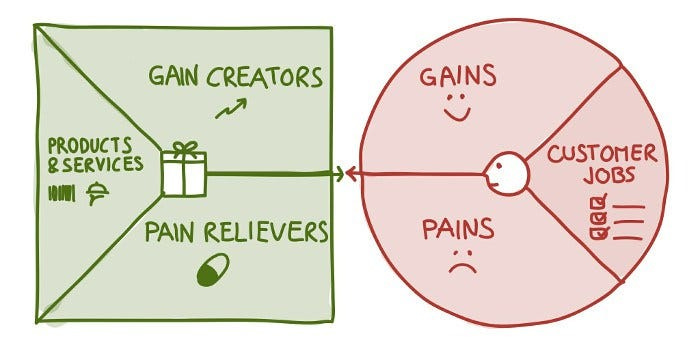

Dr. Amancio Bouza is associated with not one but two API Canvas alternatives. The first, the Value Prop Interface Canvas, captures how the API provider creates customer value. It closely resembles the Value Proposition Canvas that Alexander Osterwalder conceived of for the business context.

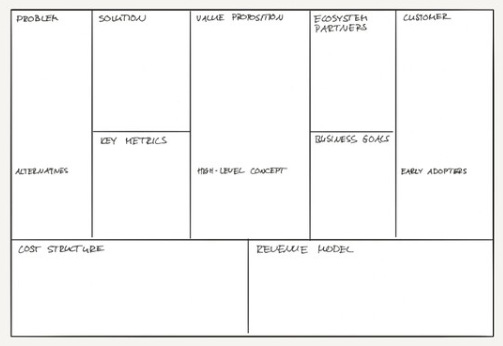

Bouza also encouraged the usage of an API Product Canvas. It attempts to get API designers thinking more broadly about what they are creating - not just "problem/solution-fit" but the trickier "problem/market-fit".

And if this looks a whole lot like where we started, you're right! We're back to a subtle variation of the original Business Model Canvas that started this all.

This Isn't the Stuff of Groundbreaking Revelations, Is It?

Much of the information provided here isn't exactly revelatory. Listing partners or putting a health endpoint into a box is pretty trivial. Capturing information in this way comes with all the downsides of other technical documentation (API or otherwise):

- Lack of Clarity and Detail: OpenAPI became as ubiquitous as it is in the space not only because stakeholders could quickly iterate over the text-based artifact but also because the description was helpful beyond the initial exercise (generating documentation, code, gateway configurations, etc.). While these canvases are useful for raising initial questions and prompting discussions, the lack of additional clarity and detail limits their continued usefulness.

- Missing Iteration Mechanism: Did you ever get something right the first time? No! Me either! Success is often an ongoing set of course corrections made when new information presents itself. We're never going to know all the answers in the beginning, so we must have a means for revisiting and revising what we are doing. Canvas work too easily becomes a "big bang" or "kickoff" type of activity - an event done at the onset, when enthusiasm is at its highest, and never reviewed again. That leads to-

- Outdated Information: Few folks have an appetite for discussing long-term tool management. However, organizations change vendors all the time, and that impacts the long-term durability of artifacts. A team may start out customizing Miro's Business Model Template and, one CIO change later, discover they no longer have licenses. Maybe (maybe) some forward-thinking team member exported everything to PDF and reshared it back to the corporate CMS. Which, as historical artifacts go, is better than nothing. But that's not a toolchain that is easy to revisit and update. The similar, more-spreadsheet-like canvases have a better shot of being maintained, but, let's be honest - those are probably good-faith efforts that evaporate as soon as the saint doing the work gets busy. In either case, you end up with something that isn't maintained.

- Inconsistency: The value of what goes into these canvases is highly correlated to the quality of the facilitator leading the discussion. Getting people out of their inboxes and into a meaningful debate is a real skill. Knowing when to probe and when to let an audience mull over something just said takes experience. And, unfortunately, there aren't a ton of folks like that. Missing stakeholders' needs is already pretty bad, but then there are the simple inconsistencies in terminology, structure, or presentation that accrue from different facilitators.

These API Canvas variants are supposed to help API stakeholders quickly explore the viability of their interfaces visually. However, approaching them with a "completionist" mindset and expecting them to be a durable, long-term artifact gets teams in trouble. If they become a required element of a design process, they go from being an aid to an elaborate waste of money, energy, and time.

To Succeed, Stop Treating These As Boxes to Be Checked

A canvas's biggest benefit may occur when teams don't try to fill the entire thing. Some of these examples already feel like they're trying to do too much. Richardson's Microservice Canvas is a useful counterexample: it emphasizes the interface and dependencies while de-prioritizing the implementation. It isn't that the implementation isn't important - it is just best left to better sources of documentation. Got interface details, descriptions, or business rules consumers need to know? Put those in an OpenAPI description.

However, using canvases to flesh out broader business, product, or dependency concerns is an option. Used in this way, they can help teams identify and articulate crucial aspects like flow metrics or non-functional requirements. In fact, I'd go further: rather than striving to complete every section exhaustively, teams should leverage the canvas to zero in on particular areas of interest or concern, and ignore the rest. Business reality is that everyone is busy, and nobody is sitting around waiting to be asked to "fill out a canvas". However, they do have questions that need to be answered. Moving around the canvas, we can quickly identify where the team is certain and where they might need to hold up and have a conversation.

As an added bonus, keeping things small and pointed, rather than the multi-day "solve all the things" exercise, reduces many of the risks that I outlined above.
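To make that triage concrete, here is a minimal sketch of treating a canvas as a list of prompts to sort rather than boxes to fill. Everything in it is an assumption for illustration: the `Confidence` levels, the `conversation_agenda` helper, and the section names (loosely borrowed from the microservice canvases described earlier) are not part of any published canvas.

```python
from enum import Enum


class Confidence(Enum):
    """Hypothetical labels a team might attach to each canvas section."""

    CERTAIN = "certain"   # Everyone agrees; no meeting time needed.
    UNSURE = "unsure"     # Worth holding up and having a conversation.
    SKIPPED = "skipped"   # Deliberately ignored or documented elsewhere.


def conversation_agenda(sections: dict[str, Confidence]) -> list[str]:
    """Return only the sections the team flagged as unsure, in canvas order."""
    return [name for name, level in sections.items() if level is Confidence.UNSURE]


# Illustrative walk around a canvas; the section names are assumptions.
canvas = {
    "interface": Confidence.CERTAIN,
    "dependencies": Confidence.UNSURE,
    "non-functional requirements": Confidence.UNSURE,
    "implementation": Confidence.SKIPPED,
    "key metrics": Confidence.CERTAIN,
}
agenda = conversation_agenda(canvas)
```

Here the agenda shrinks to the two sections that need discussion, which is the small, pointed session argued for above rather than a multi-day workshop.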

Conclusion

Treat any API canvas as a flexible tool, not a rigid framework.

In practice, the strength of a canvas lies in its ability to guide focused discussions and generate insights on specific pain points. It's not about filling in every blank space; it's about identifying gaps in understanding or strategy and using the canvas to collaboratively explore and resolve these areas. By doing so, the canvas transforms from a static checklist into a dynamic prompt for strategic thinking and problem-solving.

Are you currently using any of these canvases in your work? What pros and cons have you discovered? Are there more API-related canvases that I haven't covered? I'd love to hear how you've found success.

Milestones

- Apiture announced an additional $10 million in funding from T. Rowe Price, among others.

- The finalists for the API DevPortal Awards Gala have been announced.

- Joining such online luminaries as Maggie Mae Fish or Brian David Gilbert, AsyncAPI now has its own merch store.

- Treblle launched API Insights, its letter-grade evaluations of an API. Like Zuplo's "Rate My OpenAPI", you provide an OpenAPI description and receive feedback. I am interested in seeing how these efforts differentiate themselves from, say, Spectral linting.

- After a big developer event, OpenAI's APIs and ChatGPT interface experienced significant disruptions. A Russian-affiliated hacking group claimed to be behind the DDoS attempt, but then board-level amateur-hour drama ensued, and everybody forgot about it.

- You probably know Phil Sturgeon - when he isn't saving the planet while planting trees or circumnavigating Europe with nothing but a bike and a smile, he's authoring API books or overseeing the APIs You Won't Hate Slack community. Phil is currently looking to pick up some work to help support his plant-saving habit. If you need some input from a bona fide API expert, call Phil.

Wrapping Up

The second ¡APIcryphal! piece for paid subscribers is now available! It delves deep into former OAuth lead Eran Hammer's declaration that OAuth 2.0 is "the road to hell". It pairs nicely with my exploration of API Auth from Issue #225. Was he right? Where are we at more than a decade after that dire prediction? Paid subscribers can see and judge for themselves.

What has been so gratifying about these pieces, as well as the one I've been baking for December, is being able to retell these formative stories for new API audiences. What's not as nice is discovering just how much of this context can only be pieced back together from archived Wayback Machine sources. I get that content management systems change, and authors move on to other interests. However, it isn't easy to learn that history when bitrot constantly claims it. And I strongly believe that "those who do not learn history are doomed to repeat it".

Is a 2,000-word rumination on something said about OAuth in 2012 commercially viable today? Not without some Taylor Swift tie-in, it isn't. But that doesn't mean it isn't important. Paying subscribers make this work to preserve our collective heritage possible. And when they're not encouraging me to tease a narrative out of the tangle of memory, they also ensure that Net API Notes editions remain free of paywalls and advertising.

To become a paid subscriber, head to the subscription page. For more info about what benefits paid sponsorship includes, check out this newsletter's 'About' page.

Till next time,

Matthew (@matthew in the fediverse and matthewreinbold.com on the web)